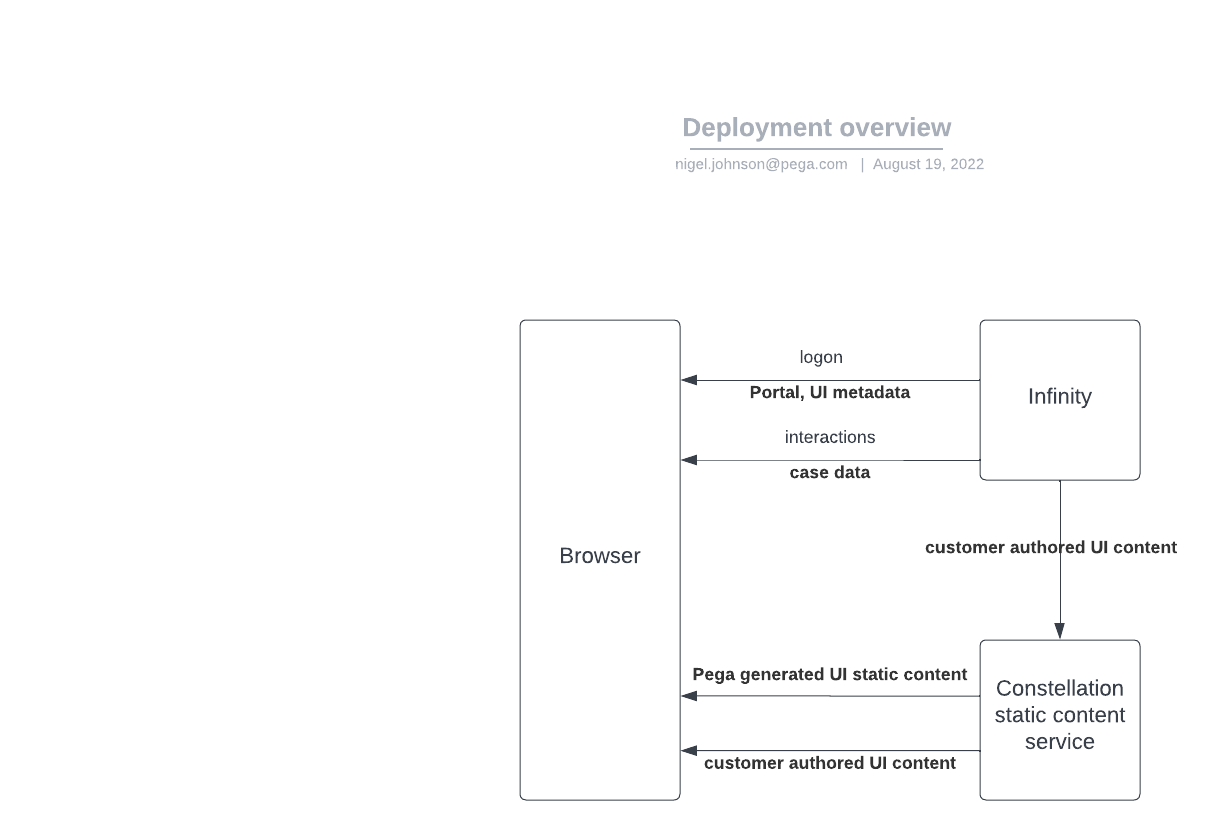

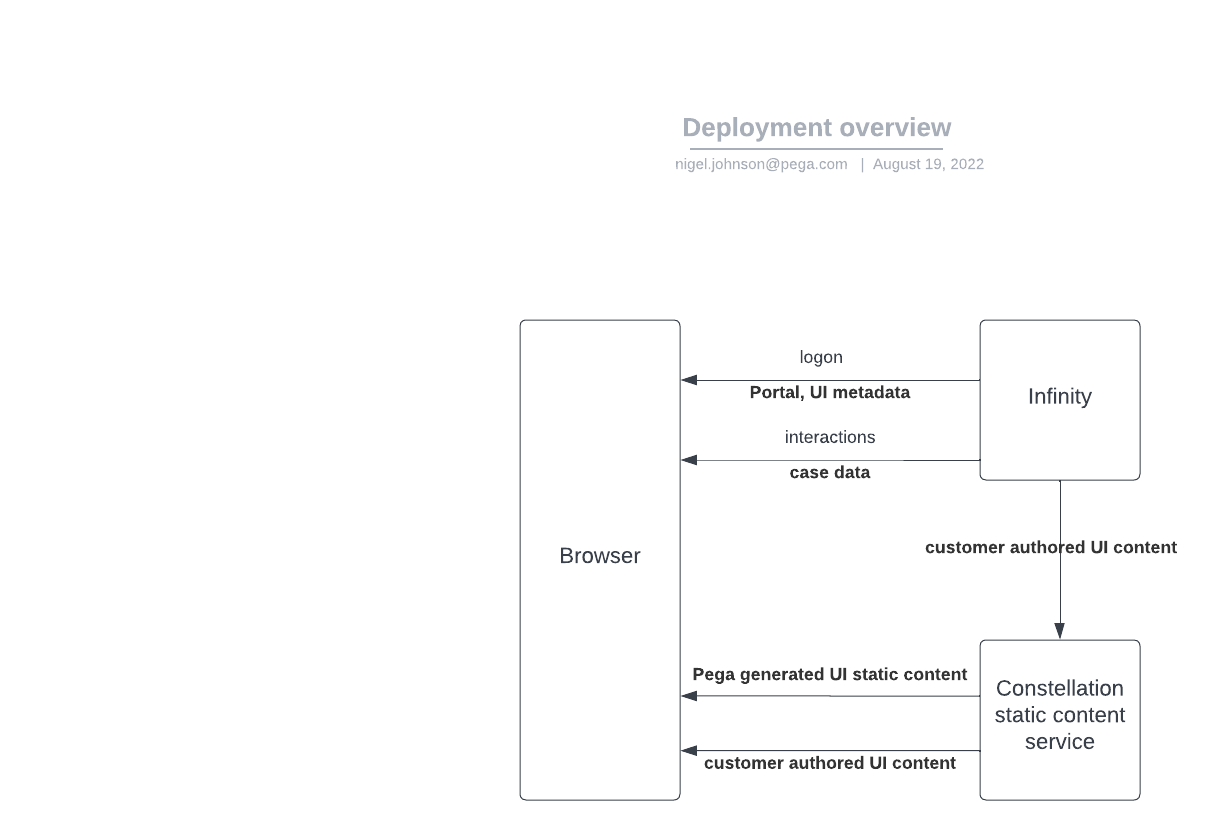

With Infinity 8.6-8.7, the Constellation UI static content used by the browser is stored in and served by a dedicated server: the Constellation Static Content Service. This allows a more rapid bug fix delivery process, and frees the case engine from spending threads on serving UI static content.

Within the Static Content service, content is stored in 2 distinct groups:

The first group is the JavaScript that takes the UI metadata and case values, builds the presentation on the client, and then manages interactions between the browser and the case engine. It is exactly the same for all customers and is tightly bound to the Infinity V2DxAPIs. It is placed in the service at build time.

The second group is customer-generated assets. As customers author their applications, they add customer- and application-specific UI assets (images, UI controls and localisations) to the presentation. These assets are pushed to the Static Content Service from Infinity and then served to the UI on demand. Customer-generated assets are all protected by security and can only be accessed with a valid token for the environment and application.

Example deployment architecture and data flows:

The Constellation Static Content service is a simple web server. It is designed to handle many Infinity deployments. Typically, for an on-prem or Customer-Cloud install, one instance should be capable of handling requests from all of the organisation's Infinity deployments: production, stage, test and dev.

Pod autoscaling can be applied, and multiple pods can be run for high availability (HA).

Operational statistics (Prometheus) are exposed through the /metrics end-point.
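As an illustration of consuming the /metrics end-point, the sketch below parses Prometheus text-format output into a dictionary. The sample metric names are hypothetical and not taken from the service. The parser assumes simple samples without trailing timestamps.

```python
def parse_metrics(text: str) -> dict:
    """Parse Prometheus text exposition lines into {sample_name: value}."""
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines and '# HELP' / '# TYPE' comment lines.
        if not line or line.startswith("#"):
            continue
        # The value is the last space-separated field; everything before
        # it is the metric name plus any {labels}.
        name, _, value = line.rpartition(" ")
        samples[name.strip()] = float(value)
    return samples


# Hypothetical sample output; the real metric names will differ.
SAMPLE = """\
# HELP http_requests_total Total requests served.
# TYPE http_requests_total counter
http_requests_total{code="200"} 1027
http_requests_total{code="404"} 3
process_resident_memory_bytes 5.2e+07
"""

metrics = parse_metrics(SAMPLE)
```

In practice the text would come from an http GET against the service's /metrics end-point rather than from a string constant.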

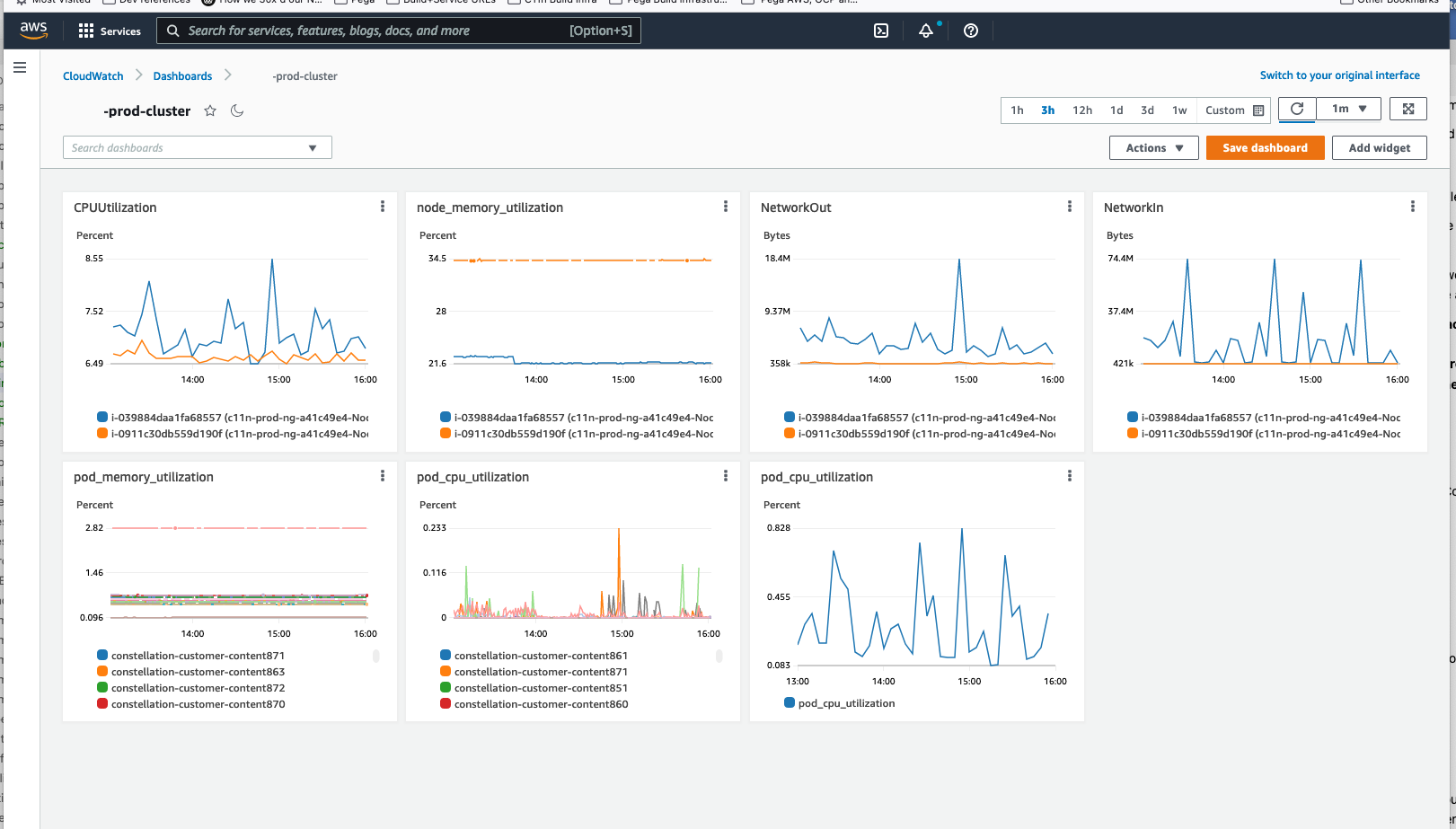

We run a single K8s pod on a lightly loaded T3.medium to support all of Pega's own use (Infinity prod, stage, test and dev).

The service can be installed from a Docker image, from a K8s YAML file or from a Helm chart.

For anything other than a simple single-user desktop trial, it is easiest to expose the service through a load balancer.

Integration with Infinity is completed by setting the Infinity DSS ConstellationSvcURL to the public URL of the service.

Customer-generated UI static assets (images, custom components, localisation files) are synchronised from Infinity to the Constellation Static service on a number of triggers within Infinity. In the simplest deployment, these assets are stored within the Docker image.

Both of these problems are solved by using a shared disk across the containers. The shared disk can be a simple local drive, a network file system (NFS), or a K8s PVC. An NFS is simple and can also be shared across data centers, which makes it very effective for HA. We use EFS for cross-region availability. For K8s deployments, a PV and PVC can be used.

The service is intended to be used in a wide variety of deployment architectures. There are a number of parameters that can be passed in, for both Docker and K8s deployments, to customise service operations:

Parameter details are in the Docker or K8s instructions.

The service API is exposed through the /swagger.html end-point.

This service is very reliable and needs little maintenance or monitoring. Here is our monitoring dashboard:

All requests and unexpected operations are logged. The log is the first place to look when resolving issues.

There is a basic 'are you connected' http end-point at /v860/ping. A request to it should return an http 200 response. If there is no response, check the log to see whether the request reached the service, then work back through the network to find the problem. While installing the service, checking the /v860/ping end-point over plain http can help separate https issues from other connectivity problems.

A more detailed check of js and disk operation is available on the /v860/healthcheck end-point.
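The two end-points above can be probed with a short script. This is a minimal sketch using only the Python standard library. The function names and the base URL in the usage note are hypothetical, and it assumes the healthcheck end-point also signals success with an http 200.

```python
import urllib.error
import urllib.request


def endpoint_url(base_url: str, path: str = "/v860/ping") -> str:
    """Join the service base URL and an end-point path."""
    return base_url.rstrip("/") + path


def check_endpoint(base_url: str, path: str = "/v860/ping",
                   timeout: float = 5.0) -> tuple:
    """Return (ok, detail) for a GET against the given end-point."""
    url = endpoint_url(base_url, path)
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200, f"HTTP {resp.status}"
    except urllib.error.HTTPError as exc:
        # The service answered, but with an error status.
        return False, f"HTTP {exc.code}"
    except (urllib.error.URLError, OSError) as exc:
        # No response at all: check the service log, then the network path.
        return False, f"no response: {exc}"
```

For example, `check_endpoint("http://localhost:3000")` probes the ping end-point and `check_endpoint("http://localhost:3000", "/v860/healthcheck")` probes the healthcheck, where the host and port are placeholders for the actual service address.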

The DxV2API on the case engine is https only. As browsers will not allow a page to mix https and http content, https must also be used for the Constellation Static service. The certificate must match the domain and be valid.

The UI asset synch end-point used by Infinity is also https, with OAuth authorisation. Java http libraries are very unforgiving with https certificates: a customer asset synch to an end-point with a bad certificate generates obscure error messages in the Infinity log. The domain must match and the certificate must be valid.
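Before pointing Infinity at the service, the certificate can be checked from a workstation. The sketch below uses Python's standard `ssl` module with default verification, which rejects untrusted, expired or mismatched certificates. The function names are illustrative. The check uses the local machine's trust store, which may differ from the one used by the Infinity JVM, so a pass here is a useful indication rather than a guarantee.

```python
import socket
import ssl
import time
from typing import Optional


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Days left before a certificate's notAfter time (negative if expired)."""
    expires = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return (expires - current) / 86400


def check_certificate(host: str, port: int = 443, timeout: float = 5.0) -> float:
    """Handshake with default verification and return days until expiry.

    Raises ssl.SSLCertVerificationError if the chain is untrusted, the
    certificate has expired, or it does not match `host`.
    """
    context = ssl.create_default_context()  # verifies chain and hostname
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            cert = tls.getpeercert()
    return days_until_expiry(cert["notAfter"])
```

Calling `check_certificate` with the public host name of the load balancer either raises a verification error or returns the number of days the certificate remains valid.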

One easy path to solving both of these issues is to put a good certificate on the load balancer.

The Docker image provided by Pega is built from node:xx-alpine, a standard Node.js on Alpine image. It is small and well suited to the purpose. Some customers prefer to build on their own base images. This can be done by taking the Pega-provided image and transferring its Pega content to the customer's own image.

Custom docker image build instructions:

When downloading an image, select the highest build matching the release, or just use the latest tag:

8.6.0-ga-18 / 8.6.0-ga-latest

8.6.1-ga-17 / 8.6.1-ga-latest

8.6.2-ga-32 / 8.6.2-ga-latest

8.6.3-ga-4 / 8.6.3-ga-latest

8.6.4-ga-4 / 8.6.4-ga-latest

8.6.5-ga-6 / 8.6.5-ga-latest

8.6.6-ga-5 / 8.6.6-ga-latest

8.7.0-ga-57 / 8.7.0-ga-latest

8.7.1-ga-37 / 8.7.1-ga-latest

8.7.2-ga-25 / 8.7.2-ga-latest

8.7.3-ga-55 / 8.7.3-ga-latest

8.7.4-ga-14 / 8.7.4-ga-latest
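When the tags are listed programmatically, the "highest build matching the release" rule can be sketched as below. The tag pattern is inferred from the list above. The function name and the lower build numbers in the example are hypothetical. Build numbers are compared as integers, because a string comparison would rank build 9 above build 32.

```python
import re
from typing import List, Optional

# Matches tags such as "8.6.2-ga-32"; "-latest" tags do not match.
TAG_PATTERN = re.compile(r"^(\d+\.\d+\.\d+)-ga-(\d+)$")


def highest_build(tags: List[str], release: str) -> Optional[str]:
    """Return the tag with the highest numeric build for `release`."""
    best = None  # (build_number, tag)
    for tag in tags:
        match = TAG_PATTERN.match(tag)
        if match and match.group(1) == release:
            build = int(match.group(2))
            if best is None or build > best[0]:
                best = (build, tag)
    return best[1] if best else None


# Example input; the "-ga-9" build is made up to show numeric ordering.
available = ["8.6.2-ga-9", "8.6.2-ga-32", "8.6.2-ga-latest", "8.7.0-ga-57"]
selected = highest_build(available, "8.6.2")
```

Here `selected` is `"8.6.2-ga-32"`. A release with no numbered builds in the list yields `None`.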